Hexagonal Architecture in Serverless Functions

Honest take on ports and adapters in a Cloudflare Workers context. When to skip it and when to use it. Spoiler: it's not an all-or-nothing choice.

Table of Contents

I wrote about why I chose Hono over ASP.NET Core not long ago. My main argument: for most APIs, ASP.NET Core brings too much ceremony. Dependency injection, middleware pipelines, Entity Framework. All that before you write a single line of business logic.

But something kept bugging me after I hit publish. If you strip away all the structure, what happens when the code actually gets complex?

Do you just end up reinventing it, one messy function at a time?

I think the honest answer is: sometimes, yes.

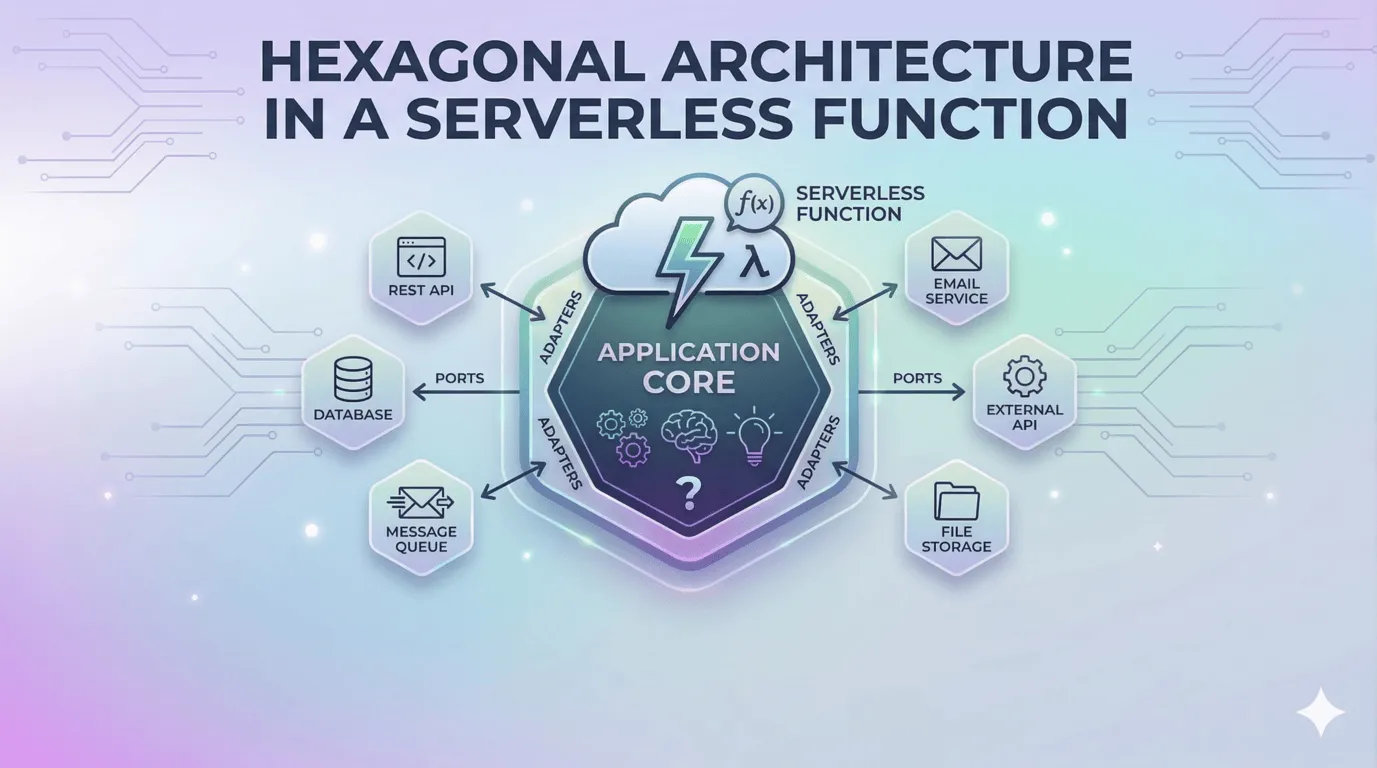

What hexagonal architecture actually is

Hexagonal architecture (also called ports and adapters) is a way of organizing code so your business logic doesn’t know where its data comes from.

Your application defines ports: interfaces that describe what it needs. The outside world provides adapters: concrete implementations of those interfaces.

The idea comes from enterprise software. Java and C# shops have been doing this for decades. It’s closely related to Clean Architecture and the Dependency Inversion Principle.

If you’ve worked with ASP.NET Core, you’ve already used this pattern. Every time you registered a service in Program.cs with builder.Services.AddScoped<IUserRepository, SqlUserRepository>() and injected it through a constructor, that’s ports and adapters. The DI container just does the wiring for you.

So the question is, does any of this belong in a Cloudflare Workers function?

For simple endpoints? No.

When I started building with Hono on Workers, I kept things flat on purpose. A route handler that reads from D1 and returns JSON doesn’t need an abstraction layer.

app.get('/api/users', async (c) => {

const users = await c.env.DB.prepare('SELECT * FROM users').all()

return c.json(users.results)

})

No interface, no adapter, no indirection. You read this and know exactly what it does. Adding a UserRepository interface and a D1UserRepository class would triple the code for no real benefit.

For endpoints like this, hexagonal architecture is overkill.

Then I needed to switch databases

I started a project on D1. It was perfect for the early stage: SQLite at the edge, zero config, fast reads. My handlers talked to D1 directly:

app.get('/api/projects', async (c) => {

const { results } = await c.env.DB

.prepare('SELECT * FROM projects WHERE owner_id = ?')

.bind(c.req.param('userId'))

.all()

return c.json(results)

})

The project grew. I needed things D1 couldn’t give me: complex joins, full-text search, JSONB columns for flexible metadata. Postgres was the obvious next step, so I set up a Neon database. It was built for serverless from the ground up: HTTP-based connections, branching for preview environments, no always-on compute.

But every route handler called D1’s API directly.

The migration meant rewriting every single data access call. Not tweaking, rewriting. c.env.DB.prepare() and Postgres.js sql tagged templates are completely different APIs. Every file, every handler, every query.

And then it clicked. None of my business logic actually cared about D1 or Postgres. It just needed to get projects, save users, update records. I’d coupled every function to a specific database without even thinking about it.

So I pulled the data access behind interfaces:

// ports: just TypeScript types

interface ProjectRepo {

list(ownerId: string): Promise<Project[]>

getById(id: string): Promise<Project | null>

save(project: Project): Promise<void>

}

interface UserRepo {

getByEmail(email: string): Promise<User | null>

save(user: User): Promise<void>

}

Now the business logic works with these interfaces. It has no idea what’s behind them:

async function assignUserToProject(

email: string,

projectId: string,

deps: { users: UserRepo; projects: ProjectRepo }

): Promise<Project> {

const user = await deps.users.getByEmail(email)

if (!user) throw new Error('User not found')

const project = await deps.projects.getById(projectId)

if (!project) throw new Error('Project not found')

project.members.push(user.id)

await deps.projects.save(project)

return project

}

The route handler just wires everything together:

app.post('/api/projects/:id/members', async (c) => {

const { email } = await c.req.json()

const projectId = c.req.param('id')

const project = await assignUserToProject(email, projectId, {

users: new NeonUserRepo(c.env.DATABASE_URL),

projects: new NeonProjectRepo(c.env.DATABASE_URL),

})

return c.json(project)

})

The D1-to-Postgres migration went from “rewrite every handler” to “write new adapter classes, swap them in.” The business logic didn’t change at all. And if I ever move to something else down the road, I write one new adapter.

Your business logic should describe what your app does, not which vendor you’re using.

How ASP.NET Core handles this (and why Workers is different)

If you’re coming from .NET, you might be thinking: we’ve been doing this forever. And you’re right.

ASP.NET Core gives you this pattern out of the box. You register IUserRepository → SqlUserRepository in the DI container, inject it through constructors, and your controllers never touch the database directly. It’s clean.

But it comes with the full ceremony. A DI container with lifetime management (Scoped vs Transient vs Singleton), service registration files that grow with every feature, and a folder structure that gets deep fast. For large applications, that’s a reasonable tradeoff. For a serverless function running at the edge? It’s too much.

Here’s the difference with Hono on Workers: TypeScript gives you everything you need without the framework overhead. Types for your ports. Classes or factory functions for your adapters. Plain function arguments for injection. No container. No decorators. No @Injectable(). Just functions that take their dependencies as arguments.

// ASP.NET Core way: container registration, constructor injection

builder.Services.AddScoped<IProjectRepo, SqlProjectRepo>();

// Hono way: just pass it in

const project = await assignUserToProject(email, projectId, {

users: new NeonUserRepo(c.env.DATABASE_URL),

projects: new NeonProjectRepo(c.env.DATABASE_URL),

})

Same pattern. A fraction of the setup.

Workers already do half of this for you

Here’s what’s interesting about Workers: the runtime already gives you a form of dependency injection.

Every Workers function receives an env object with its bindings: D1 databases, KV namespaces, R2 buckets, secrets, service bindings. You don’t import a global database client, you receive it per request. That’s already one level of indirection.

Hono makes this even cleaner. You access bindings through c.env, which means your route handlers are already decoupled from global state. The framework is pushing you in that direction without making it a whole thing.

So the real question isn’t whether you should use hexagonal architecture in Workers, but how far you take it.

When to use it and when to skip it

After going through that migration, here’s where I’ve landed:

Skip it when:

- The handler is a data pass-through (fetch, maybe transform, return)

- There’s one external dependency

- The function is short and simple

- You’re prototyping and need to move fast

Use it when:

- The handler talks to multiple external systems

- There’s real business logic worth testing on its own

- You’re likely to swap a provider at some point

- Multiple people are working on the same codebase

One dependency? Call it directly. Three or more? You probably want interfaces.

Where adapters tend to pay off

Here are the spots where I keep reaching for it:

- Databases. This one’s obvious after the D1 story. D1, Neon with Postgres.js, Turso, Drizzle. The options keep multiplying. An adapter means you pick the best one today without locking yourself in.

- Email. Resend, Postmark, SendGrid, SES. They all send email. Your business logic shouldn’t care which one.

- Logging and observability.

console.logworks great until you need structured logs in Datadog or Sentry. An adapter lets you start simple and swap in a real provider later without touching business logic. - File storage. R2, S3, local disk in tests. Same operations, different backends.

- Testing. This is the one people underestimate. If your handler calls

c.env.DB.prepare()directly, you need a real database to test it. With an interface, you pass in a fake and test the business logic on its own. No database, no network, no flaky CI.

Notice a pattern? These are all things that you might reasonably switch. If you’re never going to change it, don’t abstract it.

Should you do it?

For most Workers endpoints? No. The simple ones, and there are a lot of them, should stay simple. Don’t add abstractions to a short handler that fetches data and returns JSON.

But for the complex ones, where real logic meets multiple external systems? It’s worth the extra structure. And the serverless version of the pattern is lighter than what you’re used to from .NET or Java. No framework, no container. Just types and function arguments.

I’d recommend starting without it. Write the simplest thing that works. When you feel the pain of coupling, when a migration or a provider swap turns into a rewrite, that’s the moment to introduce the abstraction.

You’ll know when it’s time.